Activation

Activation is a Python package of custom CUDA-based activation kernels, primarily targeting AMD GPUs.

- Currently implemented

FusedAddRMSNorm

A fused operator that combines residual addition (x + residual) with RMSNorm in a single kernel.

Instead of:

y = x + residual
hidden_state = rms_norm(y, weight, eps)
out = y + some_op(hidden_state)

Fused as:

hidden_state, y = fused_add_rms_norm(x, residual, weight, eps)
out = y + some_op(hidden_state)
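To make the semantics of the fused call concrete, here is a minimal pure-Python reference for a single row. It is only a sketch of the math, not the kernel itself: it assumes the usual RMSNorm definition (divide by the root mean square plus eps, then scale by weight), and the function names `rms_norm` and `fused_add_rms_norm_reference` are illustrative, not part of the package.

```python
import math


def rms_norm(row, weight, eps=1e-6):
    """RMSNorm over one row: row / sqrt(mean(row^2) + eps) * weight."""
    mean_sq = sum(v * v for v in row) / len(row)
    inv_rms = 1.0 / math.sqrt(mean_sq + eps)
    return [v * inv_rms * w for v, w in zip(row, weight)]


def fused_add_rms_norm_reference(x, residual, weight, eps=1e-6):
    """Hypothetical reference: returns (hidden_state, y) like the fused call."""
    y = [a + b for a, b in zip(x, residual)]  # residual addition
    hidden_state = rms_norm(y, weight, eps)   # normalize the sum
    return hidden_state, y


hidden_state, y = fused_add_rms_norm_reference(
    x=[1.0, 2.0], residual=[2.0, 2.0], weight=[1.0, 1.0]
)
```

Returning both values matters because the un-normalized sum `y` is reused as the residual for the next block, so a fused kernel can produce both outputs from one pass over the row.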

FusedMulPolyNorm

A fused operator that combines PolyNorm with an element-wise multiplication by a Tensor.

Instead of:

y = poly_norm(x, weight, bias, eps)
out = y * a

Fused as:

out = fused_mul_poly_norm(x, a, weight, bias, eps)
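The README does not spell out the PolyNorm formula, so the sketch below rests on an assumption: a third-order form in which `weight` holds three coefficients applied to the RMS-normalized cube, square, and first power of the input, plus a scalar `bias`. If the package uses a different definition, only `poly_norm_reference` changes; the fused multiply stays an element-wise product. All function names here are illustrative.

```python
import math


def _norm(row, eps):
    """Divide a row by its root mean square (with eps for stability)."""
    mean_sq = sum(v * v for v in row) / len(row)
    return [v / math.sqrt(mean_sq + eps) for v in row]


def poly_norm_reference(x, weight, bias, eps=1e-6):
    # Assumed form: w[0]*norm(x^3) + w[1]*norm(x^2) + w[2]*norm(x) + bias
    n3 = _norm([v ** 3 for v in x], eps)
    n2 = _norm([v ** 2 for v in x], eps)
    n1 = _norm(x, eps)
    return [
        weight[0] * a + weight[1] * b + weight[2] * c + bias
        for a, b, c in zip(n3, n2, n1)
    ]


def fused_mul_poly_norm_reference(x, a, weight, bias, eps=1e-6):
    """Hypothetical reference: PolyNorm followed by an element-wise multiply."""
    y = poly_norm_reference(x, weight, bias, eps)
    return [p * m for p, m in zip(y, a)]


out = fused_mul_poly_norm_reference(
    x=[1.0, -1.0], a=[2.0, 4.0], weight=[0.5, 0.25, 1.0], bias=0.5
)
```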

FusedMulGroupedPolyNorm (CUDA)

A CUDA-accelerated grouped variant of FusedMulPolyNorm for MoE (Mixture of Experts) models. It fuses the entire PolyNorm computation into CUDA kernels (forward and backward), with per-expert weights and bias, an in-kernel binary search for expert mapping, optional multiplication by routing scores, and hidden_clamp fusion.

Instead of:

for i, expert in enumerate(experts):
    out[start:end] = fused_mul_poly_norm(x[start:end], mul[start:end], weight[i], bias[i], eps)

Fused as:

out = fused_mul_grouped_poly_norm(x, mul, weight, bias, offsets, eps, scores=scores, hidden_clamp=10.0)
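The expert mapping mentioned above can be illustrated with a small host-side sketch. It assumes that `offsets` holds cumulative, exclusive end rows per expert (so expert i owns rows from `offsets[i - 1]` up to but not including `offsets[i]`); the actual layout expected by the kernel may differ, and `expert_for_row` is a hypothetical helper, not a package function.

```python
from bisect import bisect_right


def expert_for_row(offsets, row):
    """Binary-search the expert that owns `row`.

    Assumption: offsets[i] is the exclusive end row of expert i, and
    expert 0 starts at row 0.
    """
    return bisect_right(offsets, row)


offsets = [2, 5, 5, 9]  # expert 2 received no tokens
mapping = [expert_for_row(offsets, r) for r in range(offsets[-1])]
```

With a lookup like this, each row can find its own weight and bias independently, which is what lets a single launch replace the per-expert Python loop. Empty experts (such as expert 2 here) are skipped naturally because no row index falls in their range.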

Installation

# Local CUDA build (development)

pip install --no-build-isolation -e .

Usage

import torch

import activation

torch.set_default_device("cuda")

poly_norm = activation.layers.PolyNorm(eps=1e-6)

x = torch.randn(10, 10)

print(poly_norm(x))

Performance

- Test cases are taken from the Motif LLM.

- The results can be reproduced using the provided benchmarking tools.

- For details on how to use the benchmarking tools, please refer to the benchmarks README.

- The benchmark results may show fluctuations, especially in the backward pass and when the dimension size is small.

RMSNorm

H100 Results

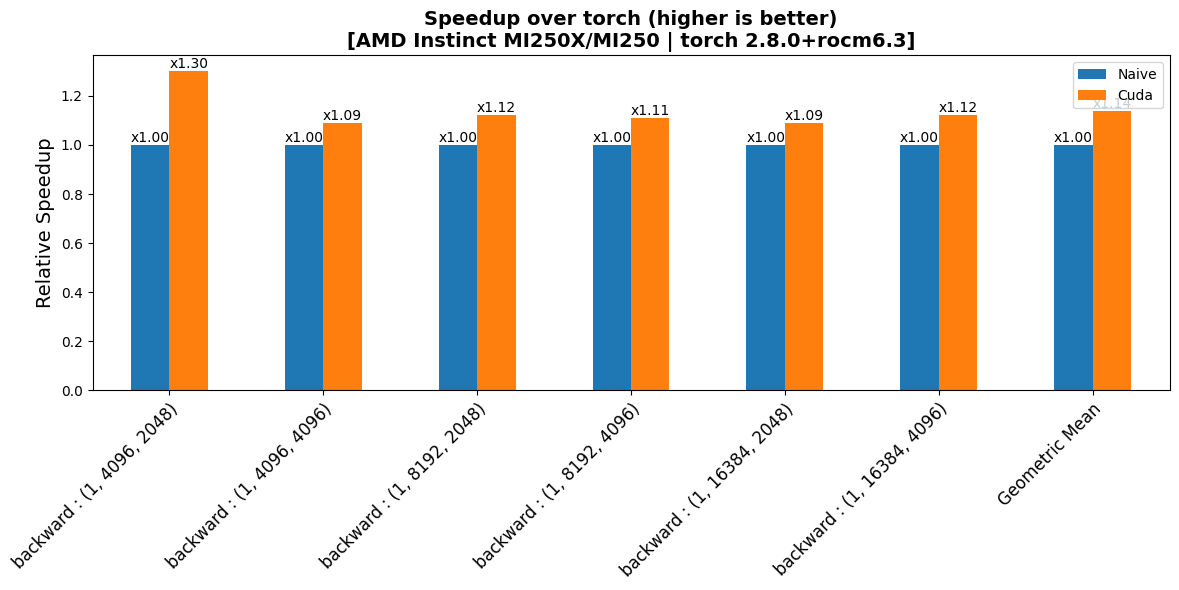

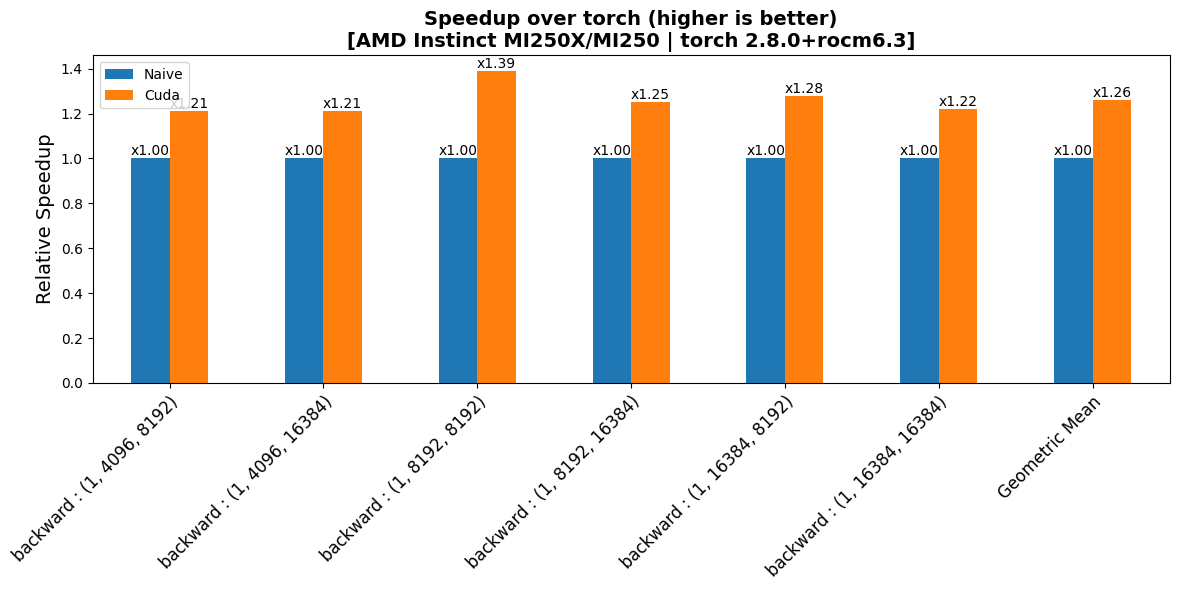

MI250 Results

FusedAddRMSNorm

For the fused-case benchmarks, the non-fused baseline was implemented with our custom kernels.

H100 Results

MI250 Results

PolyNorm

H100 Results

MI250 Results

FusedMulPolyNorm

For the fused-case benchmarks, the non-fused baseline was implemented with our custom kernels.

H100 Results

MI250 Results

FusedMulGroupedPolyNorm (CUDA)

This kernel is implemented in CUDA C++ (compiled via setup.py). Benchmarks compare three variants: Naive (raw PyTorch reference), Compiled (torch.compile'd reference), and CUDA (fused CUDA kernel). Benchmark dimension: 1280, 384 experts.

Training profile (B200, motif3_seq, lbs=8, seqlen=4K):

| | CUDA kernel | torch.compile | Speedup |
| --- | --- | --- | --- |
| Forward | 0.7 ms | 2.1 ms | 3.0x |
| Backward | 1.4 ms | 3.7 ms | 2.6x |

Pre-commit Hooks

This project uses pre-commit to automatically check and format code before commits.

Setup

Install pre-commit:

pip install pre-commit

Install the git hooks:

pre-commit install

Once installed, the configured hooks will run automatically on each commit.

Included Hooks

The following tools are run via pre-commit:

- yapf – Python code formatter

- typos – Spell checker for common typos

- isort – Organizes and sorts Python imports

- clang-format – Formats C++/CUDA code (--style=file)

- pymarkdown – Lints and auto-fixes Markdown files

- actionlint – Validates GitHub Actions workflows

Usage

Run all checks on the entire codebase:

pre-commit run --all-files

Run a specific hook (example: isort):

pre-commit run isort --all-files
